I play mostly in dynasty leagues, and as a result I spend a disproportionate amount of time thinking about how players age. Even in redraft leagues, though, age works its way into our meticulous preparations. How much do Frank Gore and Andre Johnson have left in the tank for Indianapolis? What can we expect Adrian Peterson to bring to the table?

I’m not someone who is content just to think about something. I’m a fidgeter, a fiddler, a picker, a poker. I like to turn over my thoughts as I think them and think about how I am thinking about them.

As I thought about how I thought about aging, I became more and more concerned that my entire approach was fundamentally flawed. What began as a tiny doubt grew over years into a hesitant theory, thence into a partially baked hypothesis, on into a full-blown belief.

And now that I have collected enough data over this past summer to finally put my belief to the test, I have gained further conviction that my initial doubt was correct. I think that we’re thinking about aging in entirely the wrong way.

A Brief History of Thinking About Aging

I joined my first dynasty league in 2007. It was only eight years ago, but it might as well have been another century with how blithe everyone was towards aging stars. A 28-year-old LaDainian Tomlinson was the first selection, which is at least slightly understandable since he was fresh off potentially the greatest fantasy season of the last thirty years.

Less understandable with hindsight was Shaun Alexander, 30 and coming off of a disastrous season, going with the 16th pick in the draft. Or a 28-year-old Brian Westbrook going 8th.

At the time, though, such picks were hardly out of place. It was quite common to see 28-, 29-, and even 30-year-old running backs commanding high picks in startup drafts and premium compensation in trade. Workhorses were seeing a fantasy boom, and the community felt as if the bubble would never burst, as if Father Time wasn’t quite fast enough to catch this new breed of better, quicker, stronger backs.

Father Time laced up his cleats and quickly disabused us all of that notion.

When the bubble burst, it inspired a new round of research looking for patterns in the effects of aging on star fantasy performers. More and more owners started reaching back into history to try to find some sort of model for what the latter stages of an NFL career might look like.

From that research sprang the idea of aging curves, which quickly gained widespread popularity throughout the hobby. Imbued with the power to explain when a player would decline and by how much, they became indispensable tools in an owner’s fantasy tool belt.

Aging curves come in many forms, but at the root almost all will very closely resemble something like this, courtesy of the indefatigable Chase Stuart. A player enters the league at a young age, improves over the course of his first few years, peaks in his mid-to-late twenties, and then declines (with the speed and severity being dictated largely by the position he plays).

There are many processes to arrive at an aging curve, but the simplest is merely averaging the production of players at certain ages. Another method (the one used by Mr. Stuart) is comparing a player’s production one year to his production the year before and averaging the changes. One could also chart total production by all players of a certain age. Each method will produce a slightly different curve, but they will all produce a curve.

The problem that bothered me the more I thought about aging, though? Actual NFL careers are very rarely curve-shaped.

Sometime around the 2010 season, with some corner of my mind perpetually worrying at the problem like a terrier with a stuffed rat, I ran across an offhand remark (I’m quite unsure where) that the study of baseball pitchers also produced a similar age curve. Curiously, though, if you omit a pitcher’s final season, his curve becomes almost flat.

In 2011, Brian Burke of Advanced Football Analytics published his own contribution to the “aging curve” genre. After producing a couple of fairly standard aging curves, he ends his post with a little twist. “One of the more interesting things in the numbers,” Burke writes, “was that the final year of a QB's career, regardless of age, is usually pretty bad, but not necessarily worse than the usual year-to-year variation in any individual QB's resume.”

Burke goes on to provide an aging curve for quarterbacks if you ignore their final season. Only this time, the “curve” is nothing of the sort. It is a stable plateau.

The pieces start to fall into place.

An Interesting Hypothetical

Let’s pretend for a moment that a witch doctor makes himself known to NFL players with an offer. He has a magic spell that will completely eliminate the effects of age, injury, overuse, or general wear and tear. In fact, he can make it so that a player is perfectly consistent from year to year with absolutely no variance in his performance.

Like all Voodoo curses, this one comes with a cost. Before the season, the witch doctor will take a sack of 100 white ping-pong balls. He will replace a single white ball with one black ball, mix them together, and draw one out. If he draws a black one, the player will suffer a career-ending injury in the first quarter of the first game.

If he draws a white one, however, the spell will continue for another year. Each following season, he will start again with a sack of 100 white balls and replace twice as many as the year before with black ones (two, then four, eight, sixteen, and so on) before repeating the drawing.

Imagine that, upon turning 27, every receiver in the league takes him up on his offer. Imagine also that every ensorcelled receiver produces 100 Estimated Value over Baseline per season (or EVoB, our value measurement of choice for the remainder of this article).

Now, in this new NFL, what would player aging look like? At age 27, there would be a single black ping-pong ball, so 99% of players would produce 100 EVoB, while the unlucky 1% would produce 0. The average production of 27-year-olds would be 99 EVoB.

At age 28, 2% of receivers whose careers survived the age-27 drawing will wind up pulling a black ping-pong ball, so the average production among the receivers still standing will be 98 EVoB. At 29, it falls to 96 EVoB. At 30, 92 EVoB; at 31, 84; at 32, 68. 33-year-olds would average 36 EVoB, and at age 34, any remaining survivors would find their luck had entirely run out, with nobody making it past opening day. If we plotted average production by age, we would produce a picture-perfect aging curve.

But is an aging curve an appropriate model for these hexed receivers? Of course it is not. Every single receiver is either producing 100 EVoB, or he is producing 0 EVoB. There is no “decline” at all. Any appearance of one is an illusion brought on solely by aggregation.
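For anyone who wants to check the ping-pong arithmetic, here is a minimal Python sketch of the hypothetical. The function names are my own invention, and the averages are taken among receivers who actually reach each year's drawing, which is how the numbers above are computed.

```python
FULL_VALUE = 100  # EVoB produced by every hexed receiver who avoids the black ball
SACK_SIZE = 100   # ping-pong balls in the witch doctor's sack

def black_balls(age, start_age=27):
    """Black balls in the sack: 1 at age 27, doubling yearly, capped at the sack size."""
    return min(SACK_SIZE, 2 ** (age - start_age))

def average_evob(age):
    """Average EVoB among receivers who reach this age's drawing."""
    white_balls = SACK_SIZE - black_balls(age)
    return FULL_VALUE * white_balls / SACK_SIZE

# Every individual receiver scores either 0 or 100; only the average slopes downward.
for age in range(27, 35):
    print(age, average_evob(age))
```

The printed averages trace a smooth-looking decline even though no single receiver in the simulation ever produces anything between 0 and 100.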

Another Model

Switching gears once again, let’s talk about life insurance.

For purchasers of life insurance, it represents a failsafe, a backstop, an assurance that no matter how bad things do get, they will not get as bad as they could. Life insurance provides the means, should unthinkable tragedy strike, to continue to afford housing, schooling, or anything else one might need but otherwise be unable to pay for.

For peddlers of life insurance, it represents something quite different: a financial investment. Life insurance is a product designed to create a profit. In order to do so, the offering company must carefully structure its bets so that it has more money coming in in the form of premiums than it has going out in the form of claims.

How does a company achieve this balance? It hires specially trained mathematicians called actuaries to study and categorize risk. And those actuaries create something called “mortality tables” (or “life tables” or “actuarial tables”). These mortality tables calculate the odds, if you are a certain age, that you will survive the year.

Life is a rather binary state of being. One does not “decline” in life. One is not 100% alive at 40, but only 99.8% alive at 41. Instead, if you are alive at 40, there is a 99.8% chance that you will still be alive at 41.

These mortality tables should self-evidently be a better way to model what’s going on in my silly Voodoo hypothetical. We’re not looking to measure average production so much as we’re looking to estimate the chances of a player “surviving” the year as a fantasy-viable asset.
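As a rough sketch of what a mortality table looks like in code, the snippet below chains per-age "death" rates into the chance of still being active after each season. The rates are the ones from the Voodoo hypothetical, not real NFL data, and the function and variable names are hypothetical.

```python
def cumulative_survival(death_rates):
    """Turn per-age death rates into the chance of surviving through each age."""
    alive = 1.0
    table = {}
    for age in sorted(death_rates):
        # Each year's rate only applies to players who survived every earlier year.
        alive *= 1 - death_rates[age]
        table[age] = alive
    return table

# Death rates from the ping-pong hypothetical: 1%, 2%, 4%, ... capped at 100%.
voodoo_rates = {age: min(1.0, 2 ** (age - 27) / 100) for age in range(27, 35)}

for age, chance in cumulative_survival(voodoo_rates).items():
    print(f"survives the age-{age} season: {chance:.1%}")
```

The table itself only stores conditional, one-year odds; anything about a whole career comes from multiplying those odds together.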

That was just an absurd thought experiment, however. Does this have anything at all to do with the actual NFL, sans black magic? I’ve thought for some time that it did, and this offseason I finally put together enough data to put it to the test. And the results were encouraging, to say the least.

A Case For a Mortality Mindset

Pitting the “age curve” hypothesis against the “mortality table” hypothesis is relatively simple. Both models of aging make certain claims, and those claims are testable. I will quickly lay out two such claims and present the evidence.

First, an “age curve” implies that as players age, they become more likely to decline. To put this to the test, I pulled from my database the top 50 running backs and the top 50 wide receivers of the last 30 years in career fantasy value, looking only at retired players.

I defined a player’s last “fantasy-relevant” season as his last year with more than 20 EVoB. For some context, 20 EVoB last year was roughly on par with Malcom Floyd or James Jones at receiver and Darren Sproles or Chris Ivory at running back.

My question then was simple. Of the 100 players I looked at, how many produced less EVoB in their last fantasy-relevant season than they did in their second-to-last fantasy-relevant season? Or, to put it another way, how many saw their production decline in the last year before their fantasy career ended?

If improvement and decline were totally random, we would expect about 50% of the sample to have declined in their last year and 50% to have improved. If the aging curve model held weight, though, we would expect the declines to have been more common than the improvements. The curve suggests that players at the end of their careers trend downwards rather than upwards.

What do we see in the data itself? Of the 50 running backs, 28 declined in their final fantasy-relevant season and 22 improved. Of the 50 wide receivers, 22 declined in their final fantasy-relevant season and 28 improved. Out of the 100 players, the rate of decline was exactly 50%.

That’s just the final fantasy-relevant season, though. If we extend our look back two years, does anything change? If declines and improvements were truly random, we would expect about 25% of our sample to end their fantasy career with two consecutive declines.

Instead, just 11 of the 50 running backs and 6 of the 50 wide receivers declined in each of their last two fantasy-relevant seasons, a 17% rate.
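To put a rough number on how surprising 17 out of 100 is, here is a small sketch using a plain binomial model. It assumes each player is an independent trial with a 25% chance of two straight declines, which is a simplification of my own and not a claim about how the original analysis was run.

```python
from math import comb

def binomial_tail_at_most(k, n, p):
    """P(X <= k) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k + 1))

players = 100
double_declines = 11 + 6    # running backs + wide receivers, from the text
coin_flip_rate = 0.5 * 0.5  # two independent 50/50 declines in a row

print("observed rate:", double_declines / players)
print("expected under coin flips:", coin_flip_rate)
print("chance of 17 or fewer by luck alone:",
      binomial_tail_at_most(double_declines, players, coin_flip_rate))
```

Consecutive seasons are probably not truly independent (a big year tends to be followed by a step back), so treat the tail probability as a ballpark figure rather than a formal test.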

And this is with a fairly generous definition of “decline”. In Herschel Walker’s last three fantasy-relevant seasons, he produced 109.04 EVoB, 104.24 EVoB, and 44.08 EVoB. Technically he declined in his second-to-last year, but most observers would consider going from 109 to 104 to be more a case of “holding steady”.
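The decline check itself is simple enough to sketch in a few lines. This version assumes that "second-to-last fantasy-relevant season" means the previous season that also cleared 20 EVoB, and the helper names are hypothetical. The Walker numbers are the ones quoted above.

```python
RELEVANCE_THRESHOLD = 20  # a season must exceed this EVoB to count as fantasy-relevant

def relevant_seasons(career):
    """Keep only the seasons that clear the relevance threshold, in career order."""
    return [evob for evob in career if evob > RELEVANCE_THRESHOLD]

def ended_with_declines(career, streak=1):
    """True if each of the last `streak` relevant seasons fell below the one before it."""
    seasons = relevant_seasons(career)
    if len(seasons) < streak + 1:
        return False  # not enough relevant seasons to compare
    tail = seasons[-(streak + 1):]
    return all(later < earlier for earlier, later in zip(tail, tail[1:]))

walker_last_three = [109.04, 104.24, 44.08]
print(ended_with_declines(walker_last_three, streak=1))  # declined in his final year?
print(ended_with_declines(walker_last_three, streak=2))  # declined in both final years?
```

Because any drop at all counts, Walker's slip from 109.04 to 104.24 registers as a decline exactly like his fall to 44.08 does.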

So the aging curve makes predictions that don’t mirror real-world results. This helps demonstrate why I’ve been uncomfortable with it for a while, but this does not prove that a “mortality table” approach is necessarily the right replacement. For that, we’ll run another test.

The mortality table approach tells us that, instead of general declines, there are two types of outcomes: surviving to the next year unscathed, or falling off of a fantasy cliff entirely. In order to explain observed patterns, two things must be true about players as they age. First, just like in my Voodoo hypothetical, the rate at which players “die” (or fall suddenly and unexpectedly from fantasy relevance) must increase with age. Second, the players who do not “die” should remain relatively steady. There can be variation, of course (some will do better, some will do worse), but that variation should be fairly randomly distributed so that the population average remains constant year over year.

Again, to run the test I need to define my parameters. I considered “death” to be a catastrophic bust: any player who either provided zero EVoB or saw his EVoB decline by at least 75% year-over-year is said to have “died”. Anyone else is considered a “survivor”. If a running back produces 150 EVoB one year and 30 EVoB the next (an 80% drop), he counts as a “death”. If he produces 100 EVoB one year and 30 EVoB the next (a 70% drop), he counts as a “survivor” (albeit just barely).

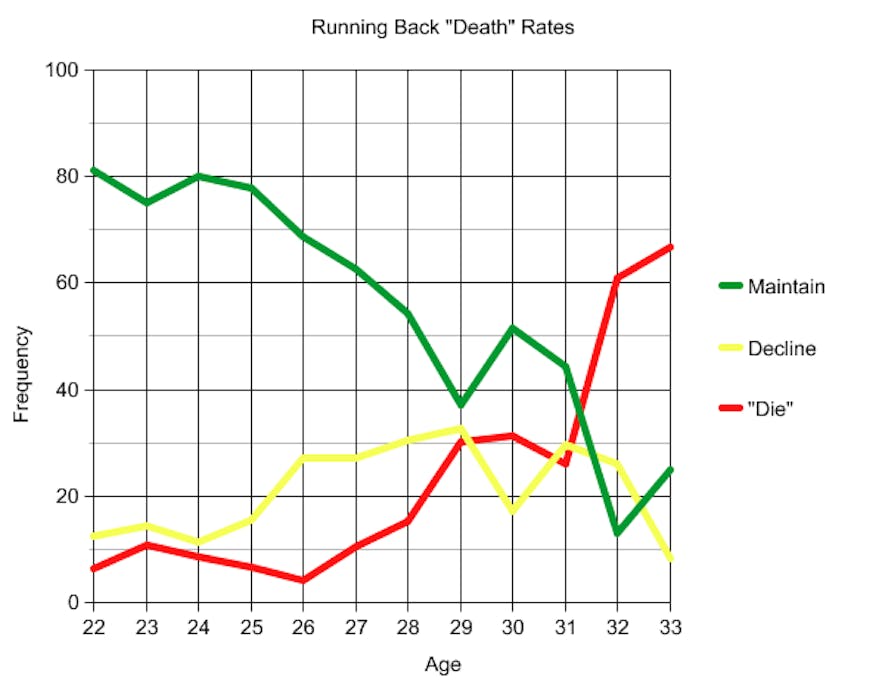

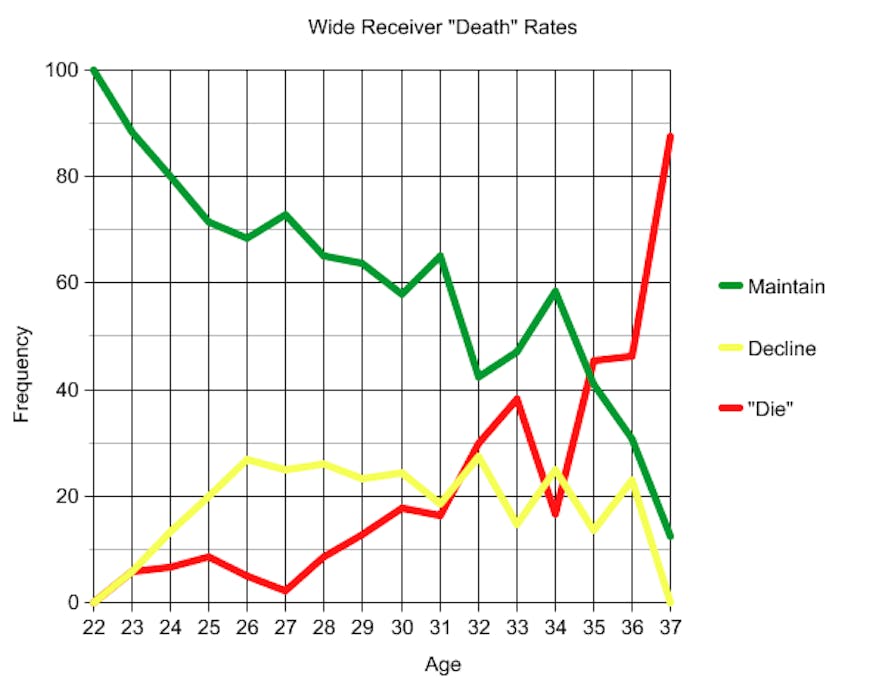

The mortality table hypothesis predicts that a chart of "death rate" (or the frequency with which players bust) vs. age should slope upwards. And, indeed, that’s exactly what we see. Here are the charts at running back and wide receiver. The red line is the one we are concerned with; that is the rate of catastrophic busts.

I also included a category called “decline” (the yellow line in the charts). This includes all players who saw their EVoB fall by between 25% and 75% from one year to the next. As you can see, the rate of players declining remains relatively flat across all ages, which once again cuts against the major premise of the “aging curve” model of player aging.
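For readers who want to build similar charts from their own data, here is a hedged sketch of the bookkeeping. It assumes the prior season's EVoB is positive, puts an exact 75% drop in the "death" bucket, and uses a "steady" label of my own for everyone else. The toy seasons are made up purely to exercise the code and do not describe real players.

```python
from collections import Counter, defaultdict

def outcome(previous_evob, current_evob):
    """Bucket a season by how far EVoB fell from the prior year (previous_evob > 0)."""
    drop = (previous_evob - current_evob) / previous_evob
    if current_evob <= 0 or drop >= 0.75:
        return "death"    # catastrophic bust
    if drop >= 0.25:
        return "decline"  # fell by a quarter or more, but not a bust
    return "steady"       # hypothetical label: small drop, flat, or improved

def rates_by_age(seasons):
    """seasons: iterable of (age, previous_evob, current_evob) tuples."""
    counts = defaultdict(Counter)
    for age, previous_evob, current_evob in seasons:
        counts[age][outcome(previous_evob, current_evob)] += 1
    return {
        age: {label: n / sum(tally.values()) for label, n in tally.items()}
        for age, tally in sorted(counts.items())
    }

# Made-up seasons, NOT real data: (age, last year's EVoB, this year's EVoB).
toy_seasons = [(28, 100, 90), (28, 100, 60), (28, 80, 85), (28, 120, 10),
               (31, 100, 20), (31, 90, 0), (31, 70, 65), (31, 110, 70)]
print(rates_by_age(toy_seasons))
```

Plotting the "death" share against age gives the red line, and the "decline" share gives the yellow one.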

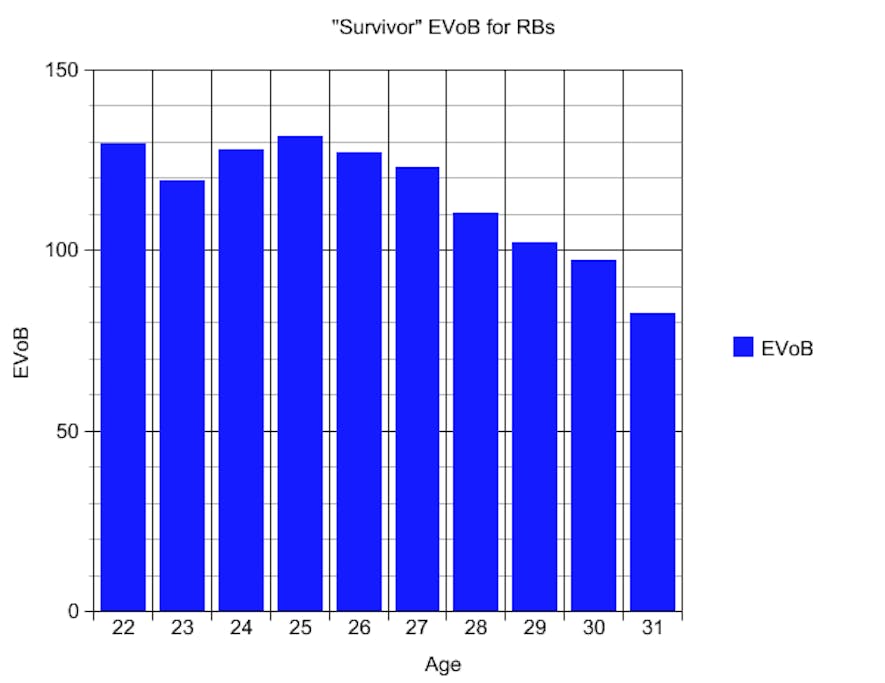

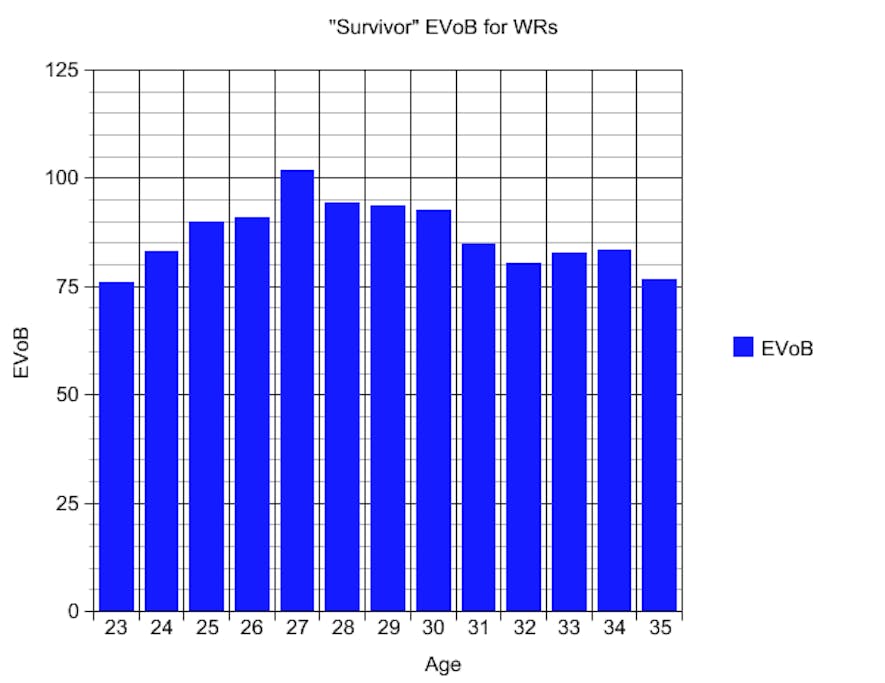

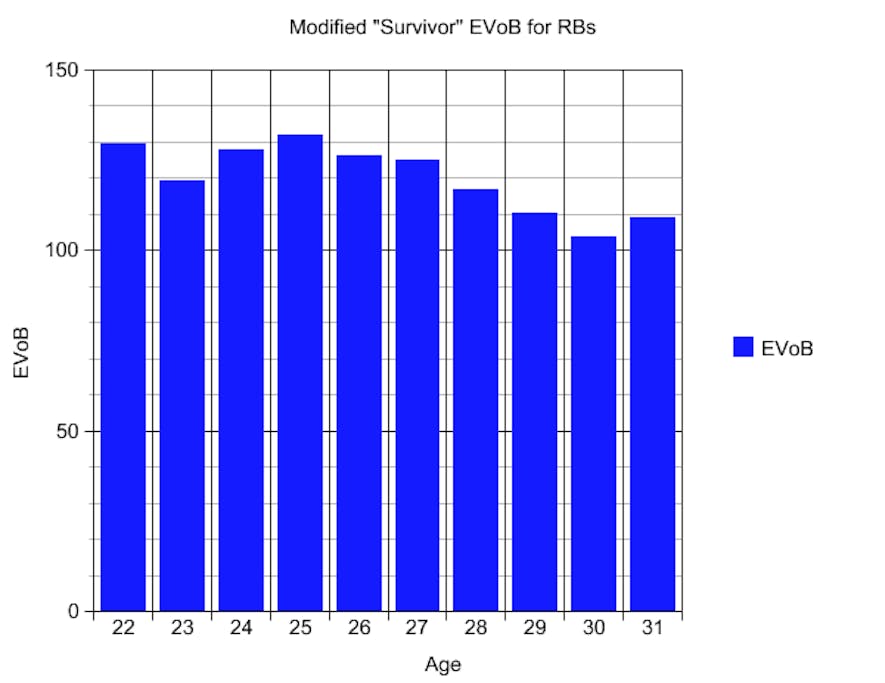

Finally, here is the average EVoB by age of all players who are not “catastrophic busts”. Essentially, this is the average fantasy value of “survivors”. You will notice that the wide receiver chart is almost completely flat, a total vindication of a “mortality table” approach. Receivers don’t decline; they hold steady unless and until they unexpectedly fall off a cliff, with the chances increasing every year.

The running back chart isn’t quite as perfect, with a slight tail-off among survivors as they age. Remember above how 28 out of the 50 backs declined in their final fantasy-relevant season, compared to just 22 out of 50 receivers? Running backs, it turns out, do tend to experience a mini-decline before they fall off the cliff entirely. If you also remove their final fantasy-relevant season, the chart flattens out remarkably:

It seems the position might benefit from a hybrid mortality table / aging curve approach, with a table to estimate the chances of a player “dying”, but “dying” itself being considered a two-year process with a moderate decline coming before the fantasy freefall.

Sharp-eyed readers might also notice that these "Survivor EVoB" charts do not go as far out as the "Death Rate" charts. The primary reason is one of sample size; for example, there were only seven "survivor" seasons among age 36 and 37 wide receivers combined. If you're unconcerned about sample sizes, the extended data does indicate a degree of decline even among the "survivors" at running back starting at age 32 and at receiver starting at age 36.

So What Does It All Mean?

This is all very fascinating, you might think, but what is the practical import? I would say that the policy prescriptions from each mindset would be noticeably different. An age curve might say you can expect to get 50% of “Typical Frank Gore” this year. A mortality tables mindset might suggest you have a 50/50 shot at getting 100% of “Typical Frank Gore”. The former labels Gore a very safe bet at RB2 production. The latter labels him a very risky pick, but one with RB1 potential.
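The two readings of Gore have the same expected value and very different risk, which a few lines of Python make concrete. The figure of 100 is an arbitrary stand-in for a “Typical Frank Gore” season, not a real projection.

```python
from statistics import mean, pstdev

TYPICAL = 100.0  # arbitrary stand-in for a "Typical Frank Gore" season

# Aging-curve reading: a near-certain half-strength season.
curve_outcomes = [0.5 * TYPICAL, 0.5 * TYPICAL]
# Mortality-table reading: a coin flip between a full season and nothing.
table_outcomes = [TYPICAL, 0.0]

for name, outcomes in [("aging curve", curve_outcomes),
                       ("mortality table", table_outcomes)]:
    print(name, "mean:", mean(outcomes), "spread:", pstdev(outcomes))
```

Both lists average out to the same number, so a projection that reports only the mean cannot tell the safe RB2 apart from the boom-or-bust RB1.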

This is emblematic of the root difference in approaches. Aging curves suggest that the aging process is, at its core, predictable. If only our approach is good enough and our data is robust enough, then we can anticipate coming changes well in advance. Mortality tables, on the other hand, model a process that is fundamentally unpredictable. They suggest a belief that dramatic busts can come at any time for any player, and they will arrive without forewarning or fanfare.

I like to say of my “mortality tables” mindset that it turns players into a series of coin flips. It’s as if the player flips a coin before the season, and if it comes up heads, he continues on completely unaffected. If it comes up tails, he falls off a cliff, never to be heard from again. As a player ages, the coin becomes more and more weighted towards tails, but each flip is fundamentally an independent event. As with a coin flip, the best we can hope to do is accurately estimate the rate of each outcome. It is beyond our capabilities to know with anything resembling certainty which will result.

Aging curves suggest an ordered and relatively homogeneous world. They suggest that a generalization that applies to an entire group is likely to also apply to the individual members, which is an error known as the ecological fallacy. Done poorly, aging curves are also prone to display manifestations of survivorship bias, assuming players who performed well at an advanced age are somehow representative of the entire population, when in reality they're only representative of players who managed to survive in the NFL to such an advanced age.

Mortality tables neatly sidestep the survivorship bias issue, because predictions for each individual age group are all conditional on the player reaching that age in the first place. A mortality table doesn't say "this 26-year-old receiver is likely to play until he's 33". Instead, it says "if this 26-year-old receiver makes it to 32, then he'll be this likely to also make it to age 33". And if the receiver is not talented enough to make it that long in the NFL, he'll wash out of the sample like most of the other 26-year-olds who came before.

Mortality tables also allow for the possibilities of outliers like Jerry Rice or Walter Payton in a way that aging curves do not; while unlikely, there’s fundamentally nothing to stop a player from continuing to flip heads a dozen times in a row. And they allow for the possibility of even greater outliers in the future, however unlikely they might be.

The other advantage of a mortality table mindset is that owners are better prepared when a player declines “prematurely”. Clinton Portis was a terrific back, and yet his final fantasy-relevant season came at age 28. Terrell Davis was essentially done after age 26. Maurice Jones-Drew led the league in rushing at age 26, yet could barely crack the rotation for the Oakland Raiders at age 29. Domanick Williams was incandescent from age 23 to 25 and then never played another snap.

Players retiring or declining dramatically in effectiveness at an age when they should be ascending or peaking can catch owners who are expecting a nice curve completely by surprise. But thinking in terms of mortality tables introduces a freeing truth: there are not “safe players” and “risky players”. Every player is a risk, with the differences being only ones of degree.

What's Next?

In a series of follow-ups, I’ll take a position-by-position look at recent NFL history. I’ll show more charts on aging patterns, discuss inconsistencies in the data, and finally endeavor to create a functional mortality table to measure expected survival chances based on current player age. For now, I just wanted to introduce the concept and lay the groundwork for what is to come.

The embrace of aging curves represented a dramatic step forward in how the fantasy community valued players who were reaching or passing their prime. It helped prevent a lot of the most egregious errors that once plagued owners. As useful as they have been, however, I think that we, as a community, can do even better.